There is a moment in every developer’s journey with AI when they hit a wall.

You have built a chatbot. It answers questions reasonably well. It summarizes text, writes emails, maybe even runs a search. And then someone asks it to do something slightly more complicated — research a topic, write a report about it, and email it — and the whole thing collapses. The AI either hallucinates half the report, forgets what it was doing mid-task, or worse, confidently produces garbage.

That wall has a name. It is called single-agent architecture. And the field of AI has been quietly building the solution to it for the last two years. That solution is called multi-agent systems, and if you have not started paying attention to it yet, you are about to fall behind faster than you think.

What Even Is a Multi-Agent System

The simplest way to understand it is to stop thinking of AI as a single brain and start thinking of it as a company.

When a startup is tiny, one person does everything — writes the code, answers the emails, manages the servers, handles the customers. It works, barely. The moment the company starts growing, that single person becomes the bottleneck. You hire a developer, a designer, a customer success person. Each one specializes. Each one does their small piece. The product gets better because no single person is overloaded.

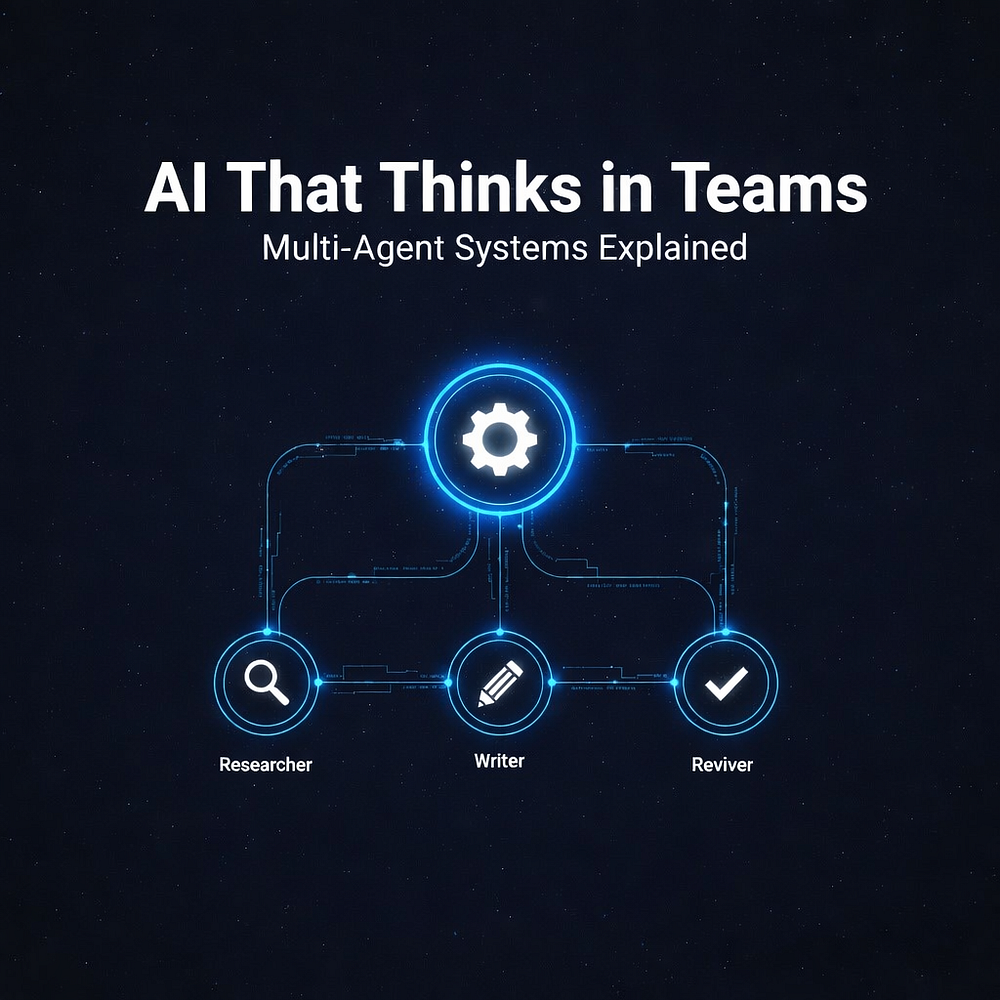

Multi-agent AI works the same way. Instead of one large language model juggling your entire task, you have multiple agents — each one focused, each one specialized — handing off work to each other, checking each other’s output, running tasks in parallel. The orchestrator says “go.” The researcher digs through documents. The writer formats the findings. The fact-checker reviews the draft. The editor polishes the language. Done.

What might take a single agent three murky attempts takes a well-structured agent team one clean pass.
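The handoff pattern above can be sketched in a few lines of plain Python. This is a minimal, framework-agnostic illustration, not any library's API: the functions `researcher`, `writer`, `fact_checker`, `editor`, and `orchestrate` are hypothetical stand-ins, and in a real system each one would wrap its own model call with its own focused prompt.

```python
# Each "agent" is a hypothetical stand-in. In a real system it would wrap
# its own LLM call with its own prompt and tools.
def researcher(topic: str) -> dict:
    return {"topic": topic, "findings": [f"fact about {topic}"]}

def writer(work: dict) -> dict:
    draft = f"Report on {work['topic']}: " + "; ".join(work["findings"])
    return {**work, "draft": draft}

def fact_checker(work: dict) -> dict:
    # Toy check: every finding must actually appear in the draft.
    verified = all(finding in work["draft"] for finding in work["findings"])
    return {**work, "verified": verified}

def editor(work: dict) -> dict:
    return {**work, "final": work["draft"].strip() + "."}

def orchestrate(topic: str) -> dict:
    """Run the specialists in order, passing each one's output to the next."""
    work = researcher(topic)
    for step in (writer, fact_checker, editor):
        work = step(work)
    return work

result = orchestrate("solar storage")
print(result["final"])
```

The point of the sketch is the shape, not the stubs: each step sees only the work item it is handed, and the orchestrator is the only piece that knows the full sequence.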

This is not science fiction. It is production software in 2026.

Use Case: Recruiting / resume screening

A job board startup is already using this pattern to automate candidate screening. One agent parses a resume. A second checks it against the job description. A third scores cultural fit based on a rubric. A fourth drafts a personalized rejection or interview invite. The recruiter only sees the final decision. What used to take two hours per batch now runs in under three minutes.

Why Single Agents Break at Scale

Before going further, it is worth understanding why the single-agent approach fails. The answer comes down to context windows, attention, and cognitive load — concepts that apply to both humans and language models more than most people realize.

Every large language model has a context window, which is the amount of text it can attend to at once. Think of it like working memory. By the classic estimate, a person can hold about seven things in their head at once before details start dropping. An LLM can hold maybe 100,000 to 200,000 tokens, depending on the model, and some newer models advertise more. That sounds enormous until you realize a medium-length research task can easily exceed it, especially if it involves browsing multiple sources, maintaining conversation history, and tracking intermediate reasoning steps.
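To make that concrete, here is some back-of-the-envelope arithmetic. Every number is an illustrative assumption rather than a measurement: the source count, the tokens per source, and the overhead figures are made up for the sake of the example, and `tokens_needed` is a hypothetical helper.

```python
# Rough context-budget arithmetic. All figures are illustrative assumptions,
# not measurements from any particular model or task.
CONTEXT_WINDOW = 200_000  # tokens; upper end of the range quoted above

def tokens_needed(num_sources: int, tokens_per_source: int,
                  history_tokens: int, reasoning_tokens: int) -> int:
    """Total tokens one agent must hold if nothing is summarized away."""
    return num_sources * tokens_per_source + history_tokens + reasoning_tokens

needed = tokens_needed(num_sources=12, tokens_per_source=15_000,
                       history_tokens=20_000, reasoning_tokens=10_000)
print(f"needed={needed:,} window={CONTEXT_WINDOW:,} overflow={needed > CONTEXT_WINDOW}")
```

Under these assumptions, a dozen moderately long sources already overflow the window before the model has written a word of the report.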

But context length is only part of the problem. The deeper issue is that long contexts can degrade model performance. Research on long-context models, most notably the 2023 paper “Lost in the Middle: How Language Models Use Long Contexts,” found that models tend to “lose” information placed in the middle of a very long context. The beginning and the end get attended to well. The middle… does not. This is known as the “lost in the middle” problem, and it is why a single agent trying to handle a long, multi-step task will produce inconsistent, patchy results even when the task theoretically fits in its context window.

Multi-agent systems solve this architecturally. Each agent works with a fresh, focused context. Information passes between agents as structured outputs, not raw text dumps. The system as a whole holds far more complexity than any single agent could manage, because that complexity is distributed.
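One way to picture "structured outputs, not raw text dumps" is a small typed handoff object. The sketch below uses only the standard library. The `ResearchFindings` schema and the `to_handoff` / `from_handoff` helpers are hypothetical names chosen for illustration.

```python
import json
from dataclasses import dataclass, asdict, field

@dataclass
class ResearchFindings:
    """Hypothetical handoff schema between a research agent and a writer."""
    topic: str
    key_points: list[str] = field(default_factory=list)
    sources: list[str] = field(default_factory=list)

def to_handoff(findings: ResearchFindings) -> str:
    # Serialize only the fields the next agent needs, not the full transcript.
    return json.dumps(asdict(findings))

def from_handoff(payload: str) -> ResearchFindings:
    # Raises TypeError if the payload has unexpected or missing required fields.
    return ResearchFindings(**json.loads(payload))

sent = ResearchFindings("battery recycling", ["costs are falling"], ["report-a"])
received = from_handoff(to_handoff(sent))
print(received.key_points)
```

The receiving agent starts from a few hundred tokens of clean fields instead of inheriting the sender's entire working context.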

The Three Frameworks Everyone Is Talking About

Three frameworks have emerged as the dominant tools for building multi-agent systems right now. Each one has a different philosophy, and picking the wrong one for your project will cost you weeks.

CrewAI — The Team Metaphor

CrewAI is built around a very human idea: give your agents roles, responsibilities, and goals, then let them work together the way a team would.

You define a “crew” of agents. One might be the “Senior Research Analyst” with instructions to gather comprehensive data on a topic. Another might be the “Report Writer” whose job is to take that data and produce a structured document. A third might be the “Quality Reviewer” who checks accuracy before output is finalized. Each agent has its own backstory, its own set of tools it can use (web search, code execution, file access), and its own personality prompt that shapes how it approaches tasks.
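The role-based idea can be illustrated without the library itself. The sketch below is plain Python and deliberately does not use CrewAI's classes: `RoleAgent`, `run_crew`, and `fake_llm` are hypothetical names, and the stub model exists only so the example runs offline.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass
class RoleAgent:
    """Hypothetical role-based agent; mirrors the idea, not CrewAI's classes."""
    role: str
    goal: str
    backstory: str

    def system_prompt(self) -> str:
        return f"You are the {self.role}. {self.backstory} Your goal: {self.goal}"

def run_crew(agents: list[RoleAgent], task: str,
             llm: Callable[[str, str], str]) -> str:
    """Run agents sequentially; each receives the previous agent's output."""
    output = task
    for agent in agents:
        output = llm(agent.system_prompt(), output)
    return output

# Stub model so the sketch runs offline: it tags the input with the role name.
def fake_llm(system: str, user: str) -> str:
    role = system.split(".")[0].removeprefix("You are the ")
    return f"[{role}] {user}"

crew = [
    RoleAgent("Senior Research Analyst", "gather comprehensive data on the topic",
              "You have spent a decade doing desk research."),
    RoleAgent("Report Writer", "turn the data into a structured document",
              "You write for busy executives."),
]
print(run_crew(crew, "EV batteries", fake_llm))
```

Swapping `fake_llm` for a real model call is the only change needed to turn the stub into a working two-agent pipeline, which is roughly the convenience a role-based framework packages up for you.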

What makes CrewAI compelling for teams new to multi-agent development is how fast you can get something working. The role-based mental model maps naturally to how humans think about collaboration. You can describe your workflow in terms of people and responsibilities, then translate that almost directly into code. The learning curve is gentle. The abstractions are sensible. For content pipelines, business automation, and workflows where the steps are clearly defined and sequential, CrewAI is probably the fastest path from idea to production.

The tradeoff is that CrewAI becomes harder to work with when your workflow needs to be truly dynamic, meaning the next step depends heavily on the result of the previous step in unpredictable ways. Its memory handling is also comparatively basic; for rich context that carries across sessions, teams often add an external memory layer like Mem0. For workflows that need to evolve and remember, you will need to bolt things on.

AutoGen — The Conversation Model

Microsoft’s AutoGen takes a completely different approach. Instead of roles and tasks, it structures agent interaction as conversation. Agents in AutoGen talk to each other. They propose solutions, critique those solutions, revise them, and converge on a result through iterative dialogue.

This sounds abstract until you think about the use cases it is perfect for. Code generation is the classic one. You have an agent that writes code, an agent that reviews it, an agent that tests it, and they go back and forth until the code works. The iterative, back-and-forth nature of the interaction is not a bug — it is the point. The final answer emerges through negotiation, just like how a good code review actually works in a real engineering team.
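Here is a toy version of that write, review, revise loop. It is not AutoGen's API, just the shape of the interaction in plain Python. The `coder` and `reviewer` functions are hypothetical stand-ins for model-backed agents, and the first draft is deliberately buggy so the loop has something to fix.

```python
from typing import Optional

# Hypothetical stand-ins for two model-backed agents. This is not AutoGen's
# API; it only illustrates the propose / critique / revise loop.
def coder(task: str, feedback: Optional[str]) -> str:
    if feedback is None:
        return "def add(a, b): return a - b"   # first draft contains a bug
    return "def add(a, b): return a + b"       # revised after critique

def reviewer(code: str) -> Optional[str]:
    """Return a critique, or None when the code passes review."""
    namespace: dict = {}
    exec(code, namespace)                      # fine for a toy; sandbox in practice
    if namespace["add"](2, 3) != 5:
        return "add(2, 3) should be 5"
    return None

def converse(task: str, max_turns: int = 5) -> tuple[str, int]:
    """Alternate coder and reviewer until the reviewer has no objections."""
    feedback = None
    for turn in range(1, max_turns + 1):
        code = coder(task, feedback)
        feedback = reviewer(code)
        if feedback is None:
            return code, turn
    raise RuntimeError("agents did not converge")

code, turns = converse("write an add function")
print(turns, code)
```

Note that the reviewer's verdict comes from actually running the code, not from an opinion about it. That grounding is what lets the conversation converge instead of circling.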

Use Case: Security / pen testing

Security teams have started using this same loop for penetration testing prep. One agent generates a list of likely attack vectors for a given codebase. A second agent tries to construct a proof-of-concept exploit for each one. A third evaluates whether the exploit actually works in a sandboxed environment. The back-and-forth continues until the team has a prioritized, validated list of real vulnerabilities — something that previously required a senior engineer and a full day.

AutoGen also has strong support for human-in-the-loop workflows, where a human can interrupt the conversation, provide feedback, and redirect the agents mid-task. This makes it unusually good for research and analytical tasks where the right answer is not known in advance and human judgment needs to stay in the loop throughout.

The complexity cost is real, though. Debugging a conversational multi-agent system is harder than debugging a linear one. When something goes wrong, it can be difficult to trace exactly which agent in which turn of the conversation introduced an error. AutoGen also tends to use more tokens than other frameworks because of the conversational overhead, which matters for cost at scale.

LangGraph — The Graph State Machine

LangGraph, built by the team behind LangChain, is the most technically demanding of the three and also the most powerful for serious production systems.

The core idea is that your agent workflow is a directed graph. Nodes are agents or actions. Edges are the paths between them. Conditional logic determines which path gets taken based on the output of each node. State is explicitly managed and persisted at every step. You can pause a workflow mid-execution, inspect its full state, resume it later, or replay it from any point.
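A stripped-down version of the graph idea fits in a page of standard-library Python. This is a sketch of the concept and not LangGraph's API: the node functions, the `NODES` and `EDGES` tables, the `run` helper, and the transaction-review scenario are all assumptions made for illustration.

```python
from typing import Callable

State = dict
END = "END"

def classify(state: State) -> State:
    return {**state, "risky": state["amount"] > 10_000}

def auto_approve(state: State) -> State:
    return {**state, "decision": "approved"}

def escalate(state: State) -> State:
    return {**state, "decision": "needs human review"}

NODES: dict[str, Callable[[State], State]] = {
    "classify": classify, "auto_approve": auto_approve, "escalate": escalate,
}
# Each edge function inspects the state and names the next node.
EDGES: dict[str, Callable[[State], str]] = {
    "classify": lambda s: "escalate" if s["risky"] else "auto_approve",
    "auto_approve": lambda s: END,
    "escalate": lambda s: END,
}

def run(start: str, state: State) -> tuple[State, list[tuple[str, State]]]:
    """Walk the graph from `start`, checkpointing the state after every node."""
    checkpoints = []
    node = start
    while node != END:
        state = NODES[node](state)
        checkpoints.append((node, state))
        node = EDGES[node](state)
    return state, checkpoints

final, checkpoints = run("classify", {"amount": 25_000})
# Resume from the state saved after the first node, as if the run had been paused.
resumed, _ = run("escalate", checkpoints[0][1])
print(final["decision"], resumed == final)
```

Because every node returns a new state instead of mutating the old one, each checkpoint is a faithful snapshot, which is what makes inspection and replay possible.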

If CrewAI is a whiteboard with sticky notes and AutoGen is a group chat, LangGraph is a state machine diagram. It is more work to set up. The mental model requires you to think like a systems engineer rather than a team manager. But what you get in return is precision, auditability, and control that the other frameworks cannot match.

Enterprise teams building compliance-sensitive workflows, financial automation, healthcare data pipelines — anything where you need to prove what the system did, why it did it, and what state it was in at every moment — will find LangGraph worth the extra investment. It is also the framework of choice when workflows are highly conditional and non-linear, where the path through the agent system changes dramatically depending on inputs that you cannot predict at design time.

A Real Scenario: How These Feel in Practice

Imagine you are building a research assistant that takes a company name and produces a comprehensive investment brief. The workflow involves pulling recent news, analyzing financial filings, summarizing competitor positioning, and writing a formatted report.

With a single agent, you prompt the LLM to do all of this. It will try. It will probably produce something that looks plausible but contains confident fabrications, missed sources, and structural inconsistencies. You will spend more time fact-checking the output than it would have taken to just write the thing yourself.

With CrewAI, you define a news researcher, a financial analyst, and a report writer. Each does its part. The report writer does not start until the other two have finished. The output is more structured, more reliable, and easier to debug. For most teams, this is where the journey should start.

With LangGraph, you build a graph where after each research step there is a validation node that checks whether the data meets a quality threshold. If it does not, the workflow loops back and tries a different source. If the financial filings agent hits a rate limit, the graph handles that gracefully and retries. The system can be paused, handed off, resumed. At any point, you can inspect exactly what state the workflow is in. For production-grade deployments with real reliability requirements, this level of control is not optional — it is necessary.
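The validation-and-retry behavior described above can be sketched independently of any framework. Everything named here is hypothetical: the `RateLimited` exception, the `fetch_with_retry` and `research_with_validation` helpers, and the idea that a source is just a callable returning a dict. The backoff delay defaults to zero so the example runs instantly.

```python
import time
from typing import Callable

class RateLimited(Exception):
    """Raised by a hypothetical source when it asks the caller to back off."""

def fetch_with_retry(fetch: Callable[[], dict], retries: int = 3,
                     base_delay: float = 0.0) -> dict:
    """Call fetch(); on RateLimited, wait with exponential backoff and retry."""
    for attempt in range(retries):
        try:
            return fetch()
        except RateLimited:
            time.sleep(base_delay * (2 ** attempt))
    raise RateLimited("gave up after retries")

def research_with_validation(sources, is_good_enough):
    """Try sources in order until one yields data that passes validation."""
    for name, fetch in sources:
        try:
            data = fetch_with_retry(fetch)
        except RateLimited:
            continue                      # source exhausted; try the next one
        if is_good_enough(data):
            return name, data
    raise ValueError("no source met the quality threshold")

calls = {"n": 0}
def flaky_source() -> dict:
    calls["n"] += 1
    if calls["n"] == 1:
        raise RateLimited()               # first call is throttled
    return {"filings": 1}                 # succeeds, but the data is thin

def rich_source() -> dict:
    return {"filings": 12}

name, data = research_with_validation(
    [("flaky", flaky_source), ("rich", rich_source)],
    is_good_enough=lambda d: d["filings"] >= 10,
)
print(name, data)
```

In a graph framework the same logic would live in a validation node plus a conditional edge back to the research step. The sketch just flattens that into two functions.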

Use Case: E-commerce / product listings

The same architecture is running inside e-commerce teams right now. A product description writer, a pricing analyst, and a competitor research agent work together every morning to update thousands of product listings before the market opens. No human touches it unless the quality reviewer agent flags something below a confidence threshold. The team that used to spend three people on this task reallocated two of them to actual product work.

The Ugly Parts Nobody Talks About

Multi-agent systems are genuinely powerful, but the discourse around them tends to skip past the parts that will actually cost you time.

Token costs multiply fast. When four agents are each running their own inference calls, your costs scale with the number of agents, the complexity of each step, and the number of retries. A workflow that seems cheap in testing can become expensive in production when you account for the full conversation overhead and tool call loops.
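A quick estimate shows how fast the multiplication happens. The formula is a simplification, and the inputs are assumptions for illustration only: the price per million tokens, the tokens per call, and the retry rate are made-up figures, and `workflow_cost` is a hypothetical helper.

```python
def workflow_cost(agents: int, calls_per_agent: int, tokens_per_call: int,
                  retry_rate: float, price_per_million: float) -> float:
    """Estimated dollars for one workflow run (simplified: flat token price)."""
    calls = agents * calls_per_agent * (1 + retry_rate)
    return calls * tokens_per_call * price_per_million / 1_000_000

# Illustrative inputs only; substitute your own model pricing and call counts.
single = workflow_cost(agents=1, calls_per_agent=1, tokens_per_call=4_000,
                       retry_rate=0.0, price_per_million=5.0)
team = workflow_cost(agents=4, calls_per_agent=3, tokens_per_call=4_000,
                     retry_rate=0.25, price_per_million=5.0)
print(f"single agent: ${single:.2f}, four-agent team: ${team:.2f}")
```

With these assumed figures the four-agent run costs fifteen times the single call: four agents, three calls each, plus a quarter more for retries. Multiply that by thousands of runs a day and the testing bill stops being a useful guide.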

Debugging is non-trivial. When an agent fails in a single-agent system, the failure is usually local and obvious. When an agent in a multi-agent system produces a bad intermediate output that then poisons a downstream agent’s work, tracing the root cause requires good observability tooling. LangSmith, Langfuse, and similar observability platforms have become essential companions for anyone running LangGraph or LangChain at scale. Using a framework without observability is like writing distributed systems code with no logging.
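Even before adopting a dedicated platform, a thin tracing wrapper goes a long way. The sketch below is a standard-library stand-in for the idea, not the API of LangSmith or Langfuse. The `traced` decorator, the in-memory `TRACE` list, and the two toy agents are hypothetical.

```python
import functools
import time

TRACE: list[dict] = []   # in-memory stand-in for a real tracing backend

def traced(agent_name: str):
    """Record every call to an agent: input, output or error, and duration."""
    def decorator(fn):
        @functools.wraps(fn)
        def wrapper(payload):
            start = time.perf_counter()
            record = {"agent": agent_name, "input": payload,
                      "output": None, "error": None}
            try:
                record["output"] = fn(payload)
                return record["output"]
            except Exception as exc:
                record["error"] = repr(exc)
                raise
            finally:
                record["seconds"] = time.perf_counter() - start
                TRACE.append(record)
        return wrapper
    return decorator

@traced("researcher")
def researcher(topic: str) -> str:
    return f"notes on {topic}"

@traced("writer")
def writer(notes: str) -> str:
    if not notes:
        raise ValueError("empty notes")
    return notes.title()

writer(researcher("agents"))
for step in TRACE:
    print(step["agent"], "->", step["output"] or step["error"])
```

When a downstream agent misbehaves, the first question is always what it was handed. Recording each agent's exact input alongside its output answers that without guesswork.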

Prompt brittleness is amplified. If your base prompts are fragile — if they work in testing but drift in production — multi-agent systems make this problem worse, not better. Because agents depend on each other’s outputs, a single prompt that produces inconsistent formatting can cascade into failures downstream. Building robust systems requires disciplined prompt engineering at every node, not just at the entry point.
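One inexpensive defense is to validate every agent's output at the boundary, before the next agent sees it. The following is a minimal sketch that assumes agents are asked to reply in JSON. The `REQUIRED` contract and the `parse_agent_output` helper are hypothetical.

```python
import json

REQUIRED = {"title": str, "bullets": list}   # hypothetical contract for one node

def parse_agent_output(raw: str) -> dict:
    """Parse and validate one agent's output before passing it downstream."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    for key, expected_type in REQUIRED.items():
        if not isinstance(data.get(key), expected_type):
            raise ValueError(f"field {key!r} missing or not {expected_type.__name__}")
    return data

good = parse_agent_output('{"title": "Q3 brief", "bullets": ["revenue up"]}')
print(good["title"])

try:
    parse_agent_output("Sure! Here is the summary you asked for...")
except ValueError as err:
    print("rejected:", err)
```

A failure here is loud and local. The alternative is a chatty, malformed reply sliding through and surfacing three agents later as a mysteriously bad report.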

Loop detection is something you genuinely need to think about. AutoGen in particular can fall into conversational loops where agents keep revising without converging. Setting hard limits on the number of turns and building in explicit convergence criteria is not optional — it is hygiene.
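A loop guard does not need to be elaborate. The sketch below combines a hard turn cap with two simple convergence checks. It is a generic illustration and not an AutoGen feature: `run_until_converged` and the toy `flip_flop` reviser are hypothetical.

```python
def run_until_converged(revise, draft: str, max_turns: int = 6):
    """Apply revise() repeatedly; stop on a fixed point, a cycle, or the turn cap."""
    seen = {draft}
    for turn in range(1, max_turns + 1):
        new_draft = revise(draft)
        if new_draft == draft:
            return new_draft, turn, "converged"
        if new_draft in seen:
            return new_draft, turn, "cycle detected"
        seen.add(new_draft)
        draft = new_draft
    return draft, max_turns, "turn limit reached"

# Two agents that keep undoing each other's change.
def flip_flop(text: str) -> str:
    return "use tabs" if text == "use spaces" else "use spaces"

print(run_until_converged(flip_flop, "use spaces"))
print(run_until_converged(str.strip, "  tidy me  "))
```

Exact string matching only catches literal repeats. Real agents tend to paraphrase themselves, so in practice the turn cap is the guarantee and the cycle check is a bonus.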

Where This Is All Going

The trajectory of multi-agent systems over the next two years points toward a few clear patterns.

Standardized agent interfaces are coming. Right now, each framework uses its own abstractions. As the space matures, you will see pressure toward common APIs, so that an agent built in CrewAI can be composed with a workflow built in LangGraph without significant glue code.

Agent marketplaces are beginning to emerge. Pre-built specialized agents — a legal document reviewer, a code security auditor, a financial data extractor — that you can drop into your own orchestration layer without building from scratch. The same way npm changed how developers think about code reuse, pre-built agents will change how teams think about workflow assembly.

Physical AI integration is where it gets genuinely strange and interesting. Multi-agent architectures are increasingly showing up not just in software pipelines but in control systems for robots, drones, and autonomous equipment. The same pattern of specialized agents coordinating through an orchestrator that works for a research report pipeline also works for a warehouse robot that needs to coordinate sensing, navigation, and manipulation as separate cognitive subsystems. The frameworks being built today for software automation will likely become the nervous systems of physical automation within the next five years.

Use Case: Healthcare / diagnostics

Healthcare is quietly becoming one of the most interesting deployment environments. Diagnostic pipelines where one agent processes lab results, another cross-references symptom history, and a third checks for drug interaction risks are already in trials at several hospital systems. The human doctor remains the decision-maker, but the cognitive load of aggregating that information… that part is being handed off. The implications for both productivity and liability are going to force some very interesting conversations over the next few years.

Where to Start Without Losing Your Mind

If you have never built a multi-agent system before, the honest advice is to start with CrewAI and a small, well-defined workflow. Pick something boring — an internal tool, a content pipeline, an email summarizer — and build it with two agents instead of one. Feel the difference. Understand the failure modes. Learn how agents pass information to each other.

From there, add LangGraph when you need state management and conditional branching. Add AutoGen when your workflow is fundamentally about iterative refinement. Use observability tools from the very first deployment, not as an afterthought.

The field is moving fast. LangGraph has seen some of the most significant developer adoption growth in the 2025 Stack Overflow developer survey. GitHub repositories around agent frameworks have accumulated tens of thousands of stars in under two years. The infrastructure for building these systems is maturing at a pace that suggests multi-agent orchestration will be a standard engineering skill within the next 18 to 24 months, not an exotic research topic.

Single agents were the proof of concept. Multi-agent systems are the architecture that makes AI actually work at the complexity level real software requires.

Your chatbot does not need to be smarter. It needs a team.